MCP is enterprise infrastructure, not a developer convenience. Treat it with the same governance discipline as your API gateway or identity provider.

Executive Summary

The Model Context Protocol (MCP), launched by Anthropic in late 2024, has achieved in under 18 months what most standards take a decade to accomplish: genuine industry-wide adoption, backed by OpenAI, Google, Microsoft, and AWS, and now governed by the Linux Foundation. As of March 2026, MCP operates in production at hundreds of enterprises, powers agentic workflows at scale, and has become the de facto integration layer for enterprise AI. But rapid adoption has outpaced governance. Most security teams haven't audited their MCP deployments. Most CTOs don't know how many MCP servers are running in their organization. This article covers what MCP actually does, why it matters for enterprise architecture, the three security vectors that keep RSA 2026 researchers up at night, and a practical governance framework to implement before an incident forces your hand.

3 key insights:

- MCP solves the quadratic integration problem: without it, connecting N agents to M tools requires N×M custom connectors. With MCP, you build once and connect everywhere.

- The protocol has moved faster than enterprise security controls: most deployments are over-permissioned, unaudited, and invisible to IT governance teams.

- The enterprises pulling ahead aren't those with the best models; they're those with the cleanest, most governed MCP architecture.

3 actions to take:

- Conduct an MCP server inventory: which servers are running, who deployed them, what permissions they hold.

- Apply authentication, scoped permissions, and observability to every server before the next agent deployment.

- Designate an MCP governance owner: the role doesn't exist in most org charts yet, and that's the problem.

Risk if ignored: Shadow Agents (unvetted AI agents accessing critical systems through ungoverned MCP servers) replicate the Shadow IT explosion of 2015, but with execution capabilities, not just data access.

Introduction

In November 2024, Anthropic quietly released an open-source specification called the Model Context Protocol. The goal was modest: standardize how AI assistants connect to external tools and data sources. No custom connectors, no brittle middleware. One protocol, any model, any tool.

Sixteen months later, MCP has become something no one fully anticipated: enterprise infrastructure. Not a developer convenience. Not an experimental API. Infrastructure: the kind that, when it goes down or gets compromised, takes business operations with it.

On March 9, 2026, the MCP core maintainers published their updated roadmap, acknowledging the shift directly: "MCP has moved well past its origins as a way to wire up local tools. It now runs in production at companies large and small, powers agent workflows, and is shaped by a growing community."

The question for enterprise leaders isn't whether to adopt MCP. Most organizations already have. The question is whether you're governing it, or whether it's governing itself.

Current landscape: From experiment to operating layer

The numbers are instructive. MCP server downloads grew from roughly 100,000 in November 2024 to over 8 million by April 2025. The ecosystem now counts over 5,800 servers and 300 clients. Industry heavyweights (OpenAI, Google DeepMind, Microsoft, AWS) have all formally committed to the standard. In December 2025, Anthropic donated MCP to the Linux Foundation's newly formed Agentic AI Foundation, alongside OpenAI and Block as co-founders.

BCG's characterization is precise: MCP is "a deceptively simple idea with outsized implications." Without MCP, integration complexity scales quadratically. Each new AI application connecting to a new data source or tool requires a custom integration. Ten AI applications and 100 tools: potentially 1,000 integrations to build and maintain. With MCP, that scales linearly. Build a server once; every MCP-compatible agent connects through it.
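The arithmetic behind that comparison can be checked in a few lines. This is a minimal sketch, not a sizing model: the function names are hypothetical, and it assumes the worst case in which every application needs every tool.

```python
def point_to_point_integrations(apps: int, tools: int) -> int:
    """Worst case without a shared protocol: one custom connector per (app, tool) pair."""
    return apps * tools


def shared_protocol_components(apps: int, tools: int) -> int:
    """With a shared protocol: one client per app plus one server per tool."""
    return apps + tools


if __name__ == "__main__":
    apps, tools = 10, 100
    print(point_to_point_integrations(apps, tools))  # 1000
    print(shared_protocol_components(apps, tools))   # 110
```

Under these assumptions, adding an eleventh application costs one more client rather than 100 more connectors.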

This efficiency gain has driven adoption faster than most enterprise technology ever moves. More than 50 enterprise partners, including Salesforce, ServiceNow, Workday, Accenture, and Deloitte, are now building or deploying MCP-enabled systems.

But here's what the adoption metrics don't capture: most of this deployment is happening without enterprise-grade governance in place. The protocol prioritized interoperability over security in its initial design. The community is catching up, but production is already ahead of the guardrails.

The architecture: What MCP actually does

Understanding MCP's architecture is a prerequisite to governing it.

MCP is a client-server protocol. In an enterprise context:

The host is your AI orchestrator: Gemini Enterprise, Claude for Enterprise, Microsoft Copilot, or a custom-built agent framework. It manages LLM interactions and user conversations.

The client is built into the host. It initiates connections to MCP servers on behalf of the user or the agent.

MCP servers are lightweight processes that expose specific capabilities: read/write access to a Jira instance, Confluence knowledge base, internal database, or customer CRM. Each server defines the tools it provides, the resources it exposes, and the prompts it can generate.

The critical insight for CTOs: MCP servers are execution endpoints. They don't just retrieve information; they can modify systems. An agent connected to a Jira MCP server can create tickets, update statuses, reassign work, close issues. An agent connected to an internal database server can write records, not just read them.

This is the architectural shift that most executive conversations about AI integration miss. RAG retrieves. MCP acts.
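To make the read-versus-write distinction concrete, here is a minimal sketch of how a server's tool list might be modeled for review purposes. It does not use any MCP SDK; `Tool`, `JIRA_TOOLS`, and the tool names are hypothetical and exist only to show that some exposed tools change state.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tool:
    name: str
    mutates_state: bool  # True if calling the tool changes the backing system


# Hypothetical tool list for a Jira-facing server (names are illustrative only).
JIRA_TOOLS = [
    Tool("search_issues", mutates_state=False),
    Tool("get_issue", mutates_state=False),
    Tool("create_issue", mutates_state=True),
    Tool("transition_issue", mutates_state=True),
]


def write_capable_tools(tools: list[Tool]) -> list[str]:
    """Return the names of tools that can modify the connected system."""
    return [t.name for t in tools if t.mutates_state]


if __name__ == "__main__":
    print(write_capable_tools(JIRA_TOOLS))
```

Listing the write-capable tools per server is a quick way to see which servers act and which only retrieve.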

The three security vectors RSA 2026 is watching

Fewer than 4% of MCP-related RSA 2026 conference submissions focused on opportunity. The security community is concentrated almost entirely on exposure. Three vectors dominate the conversation:

1. Over-permissioning

MCP servers are frequently deployed with broader permissions than the agent actually needs. A read-only use case gets a read-write server. An internal knowledge assistant gets access to production systems. This is the "least privilege" problem, familiar from cloud IAM, now replicated at every MCP server endpoint.
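A least-privilege check reduces to a set difference between what a server is granted and what its use case needs. The sketch below assumes permissions can be expressed as flat strings; the scope names are hypothetical and do not come from any real product.

```python
def excess_permissions(granted: set[str], required: set[str]) -> set[str]:
    """Permissions the server holds that the use case never needs."""
    return granted - required


if __name__ == "__main__":
    # Hypothetical scopes for a read-only knowledge assistant.
    granted = {"issues:read", "issues:write", "projects:admin"}
    required = {"issues:read"}
    print(sorted(excess_permissions(granted, required)))
    # ['issues:write', 'projects:admin']
```

An empty result means the server is scoped to its use case; anything else is a candidate for removal.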

2. Tool poisoning and prompt injection

Malicious content in the systems MCP servers connect to can manipulate agent behavior. An attacker who can inject content into a Confluence page or a Jira ticket that your agent reads can potentially redirect its actions. MCP servers that connect to user-generated content (wikis, helpdesks, email) are particularly exposed.
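One way to reason about this exposure is to treat a session as "tainted" once the agent has read user-generated content, and to require human approval for state-changing tool calls from that point on. This is an illustrative sketch only, not a control described by the protocol or by the sources above; `AgentSession`, `UNTRUSTED_SOURCES`, and the source labels are assumptions.

```python
from dataclasses import dataclass, field

# Assumption: source labels for systems that carry user-generated content.
UNTRUSTED_SOURCES = {"wiki", "helpdesk", "email"}


@dataclass
class AgentSession:
    tainted: bool = False
    sources_read: list[str] = field(default_factory=list)

    def record_read(self, source: str) -> None:
        """Track what the agent has read; mark the session tainted if the source is untrusted."""
        self.sources_read.append(source)
        if source in UNTRUSTED_SOURCES:
            self.tainted = True


def requires_human_approval(session: AgentSession, tool_mutates_state: bool) -> bool:
    """Gate write actions once untrusted content has entered the session."""
    return session.tainted and tool_mutates_state


if __name__ == "__main__":
    session = AgentSession()
    session.record_read("wiki")
    print(requires_human_approval(session, tool_mutates_state=True))
```

The gate does not detect injection; it only limits what injected content can trigger without a person in the loop.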

3. Shadow agents and ungoverned servers

One RSA session will demonstrate how an MCP vulnerability could enable remote code execution and full takeover of an Azure tenant. More broadly, researchers warn that community-built MCP connectors are entering enterprise environments without security review. Developers experimenting with AI tooling deploy MCP servers on local machines or unregistered infrastructure. IT governance teams don't know they exist. The attack surface expands invisibly.

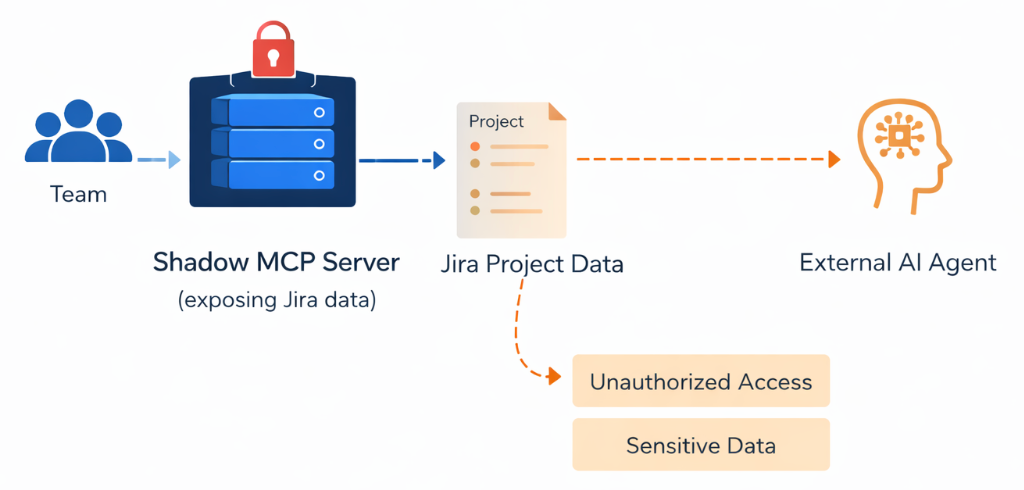

Scenario: The Shadow MCP server

Consider a simple situation.

A team deploys an MCP server to expose Jira project data for an internal AI assistant.

The server is created quickly for experimentation, and authentication is weakly configured.

An external agent discovers the MCP server and invokes the exposed tools.

Suddenly, sensitive project data becomes accessible outside the organization.

In this situation:

- the model behaves correctly

- the vulnerability lies in the tool exposure layer

This is the agentic equivalent of the shadow API problem that many enterprises faced during the early API economy.

The governance framework: Three controls before you scale

The organizations that will scale MCP safely in 2026 are those implementing three foundational controls now, before incident pressure forces it.

Control 1: Inventory and classification

You cannot govern what you don't know exists. The first step is a complete MCP server inventory: every server currently deployed, by whom, connecting to what systems, with what permissions. This isn't a one-time audit; it needs to become a continuous practice as new servers are deployed. Classify each server by the sensitivity of the systems it touches: tier 1 (customer data, financial systems), tier 2 (internal operations), tier 3 (non-sensitive tools).
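An inventory record and tier rule along these lines could be sketched as follows. The field names, the `SYSTEM_TIERS` mapping, and the rule that unknown systems default to tier 1 are assumptions made for illustration, not part of any standard.

```python
from dataclasses import dataclass
from enum import IntEnum


class Tier(IntEnum):
    CUSTOMER_OR_FINANCIAL = 1
    INTERNAL_OPERATIONS = 2
    NON_SENSITIVE = 3


# Hypothetical mapping from connected system to sensitivity tier.
SYSTEM_TIERS = {
    "crm": Tier.CUSTOMER_OR_FINANCIAL,
    "jira": Tier.INTERNAL_OPERATIONS,
    "confluence": Tier.INTERNAL_OPERATIONS,
    "weather_api": Tier.NON_SENSITIVE,
}


@dataclass
class ServerRecord:
    name: str
    owner: str              # who deployed the server
    systems: list[str]      # systems the server connects to
    permissions: list[str]  # permissions the server holds


def classify(record: ServerRecord) -> Tier:
    """A server inherits the most sensitive tier among the systems it touches.

    Unknown systems (and servers with no listed systems) are treated as
    tier 1 until someone reviews them.
    """
    tiers = (SYSTEM_TIERS.get(s, Tier.CUSTOMER_OR_FINANCIAL) for s in record.systems)
    return min(tiers, default=Tier.CUSTOMER_OR_FINANCIAL)


if __name__ == "__main__":
    record = ServerRecord("support-bot", "it-support", ["jira", "crm"], ["read", "write"])
    print(classify(record).name)  # CUSTOMER_OR_FINANCIAL
```

Defaulting unknowns to the strictest tier keeps unreviewed servers from slipping into the lowest-scrutiny bucket.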

Control 2: Authentication, scoped permissions, and observability

For each MCP server, starting with tier 1, implement three controls: SSO-integrated authentication (the March 2026 roadmap lists this as a priority area), least-privilege permission scoping (the server can only do what the specific use case requires), and structured logging (every tool call, every data access, every action, with attribution to the user or agent that triggered it). Without logs, you cannot do incident response. Without scoped permissions, you cannot contain a compromise.
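As a rough sketch of what a structured, attributable log entry could contain, the function below builds one JSON line per tool call. The field names and the example actor, server, and tool values are hypothetical; a real deployment would also need to decide which arguments are safe to log.

```python
import json
from datetime import datetime, timezone


def tool_call_log_entry(actor: str, server: str, tool: str,
                        arguments: dict, outcome: str) -> str:
    """Serialize one tool call as a JSON line with attribution and a UTC timestamp."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "actor": actor,          # user or agent that triggered the call
        "server": server,        # MCP server that handled it
        "tool": tool,
        "arguments": arguments,  # assumption: already redacted of sensitive values
        "outcome": outcome,
    }
    return json.dumps(entry, sort_keys=True)


if __name__ == "__main__":
    print(tool_call_log_entry(
        actor="agent:helpdesk-bot",
        server="jira-mcp",
        tool="create_issue",
        arguments={"project": "OPS"},
        outcome="success",
    ))
```

One line per call, with a fixed set of keys, is what makes the log searchable during incident response.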

Control 3: A governance owner

The role doesn't exist in most org charts. Someone needs to own the MCP inventory, the security standards for server deployment, the process for approving new servers before they connect to enterprise systems, and the relationship with the security team. In the organizations deploying MCP at scale today, this role is emerging organically, often falling to a platform engineering lead or an AI infrastructure owner. Making it explicit accelerates the maturity curve significantly.

Field pattern: What production MCP architecture looks like

Across organizations deploying MCP in production, a consistent architectural pattern is emerging. Rather than allowing individual teams to deploy servers ad hoc, the leading practice is a centralized MCP gateway that brokers all agent-to-server connections.

The gateway enforces authentication for every server connection. It applies permission policies ensuring agents can only access the servers their use case requires. It logs every interaction with structured metadata. And it provides the single point of governance that security and compliance teams need to audit AI agent activity.
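The gateway's three duties (authenticate, apply policy, log) can be sketched in a few lines. This is a toy model under stated assumptions: `Gateway` is a hypothetical class, the token table stands in for real SSO validation, and the in-memory list stands in for a real audit sink.

```python
from dataclasses import dataclass, field


@dataclass
class Gateway:
    valid_tokens: dict[str, str]   # token -> agent id (stand-in for SSO validation)
    policies: dict[str, set[str]]  # agent id -> servers that agent may reach
    audit_log: list[dict] = field(default_factory=list)

    def authorize(self, token: str, server: str) -> bool:
        """Authenticate the caller, check policy, and record the decision either way."""
        agent = self.valid_tokens.get(token)
        allowed = agent is not None and server in self.policies.get(agent, set())
        self.audit_log.append({"agent": agent, "server": server, "allowed": allowed})
        return allowed


if __name__ == "__main__":
    gateway = Gateway(
        valid_tokens={"token-123": "helpdesk-bot"},
        policies={"helpdesk-bot": {"jira-mcp"}},
    )
    print(gateway.authorize("token-123", "jira-mcp"))  # True
    print(gateway.authorize("token-123", "crm-mcp"))   # False
    print(len(gateway.audit_log))                      # 2
```

Denied requests are logged alongside allowed ones, since refused attempts are often the first sign of a misconfigured or unvetted agent.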

This mirrors what Kubernetes did for container orchestration: providing a governance layer over a set of independently useful but hard-to-control components. AWS and IBM have independently identified orchestration layers as the critical infrastructure for multi-agent systems.

The practical implication: don't let MCP server deployment become a free-for-all. Centralize governance before you scale deployment.

Implications: The integration advantage becomes durable

The organizations building clean MCP architecture now are accumulating an advantage that compounds. Well-governed MCP infrastructure means:

- New agents can be deployed in days, not months, because the integration layer already exists and is already trusted by IT and security.

- Agent performance improves continuously because the data and tool connections are reliable, monitored, and maintained.

- Compliance with emerging AI regulation (the EU AI Act's full enforcement deadline is August 2026) is more tractable when every agent action is logged with attribution.

Gartner projects that 40% of enterprise applications will include task-specific AI agents by end of 2026, up from less than 5% in 2025. The velocity is extraordinary. The organizations that have governed MCP infrastructure in place will deploy into that expansion. The ones that don't will be rebuilding under pressure.

Case study: Centralized MCP governance at scale

In one large European retail organization I support, the initial MCP deployment pattern was decentralized: individual teams built servers for their specific use cases (IT support, procurement, internal communications) without coordination. Within three months, there were nine MCP servers running across the organization, with inconsistent authentication, no centralized logging, and permission scopes that had grown beyond the original use case requirements.

The architecture review identified the exposure. The remediation followed the three-control framework: a complete inventory with tier classification, standardized authentication and permission scoping applied server by server (prioritizing those touching customer and operational data), and a designated platform engineering owner responsible for approving new server deployments.

The outcome isn't just security improvement; it's architectural clarity. The organization now has a governed integration layer that accelerates new agent deployments rather than creating new risk with each one. The MCP gateway handles authentication, logging, and permission enforcement centrally. New use cases connect in days.

The lesson: governance imposed after an incident is far more expensive than governance designed in from the start.

Key takeaways

- MCP is enterprise infrastructure, not a developer convenience. Treat it with the same governance discipline as your API gateway or identity provider.

- The security exposure is real and documented: over-permissioning, tool poisoning, and shadow agents are the three vectors to address immediately.

- The governance framework is three controls: inventory + classification, authentication + scoped permissions + observability, and a designated governance owner.

- The centralized MCP gateway pattern (a single brokered connection layer for all agent-to-server traffic) is the emerging best practice for organizations deploying at scale.

- Organizations with clean MCP architecture will deploy the next wave of AI agents faster and more safely than those building governance after the fact.

Conclusion

MCP has achieved something remarkable: genuine industry-wide adoption in under 18 months, driven by technical elegance and clear enterprise demand. The protocol solves a real problem, AI integration complexity, and it solves it well.

What it doesn't solve is governance. That's the work in front of enterprise leaders right now. The window for proactive governance is open, but it's narrowing. Shadow agents are already appearing in organizations that moved fast on deployment and slow on oversight.

The MCP roadmap for 2026 explicitly lists enterprise readiness (audit trails, SSO-integrated auth, gateway behavior) as a priority area. The governance tooling is coming. The question is whether you implement it before or after your first incident.

Build the inventory. Scope the permissions. Designate the owner. The architecture you govern today is the infrastructure you scale confidently tomorrow.

Author: Godwin Avodagbe, Deputy Director Digital Transformation, GALEC (E.Leclerc Group, ~€60B revenue). Founder, eKoura & HitoTec. Cambridge Judge Business School CTO Programme. Specialises in enterprise AI architecture and large-scale digital transformation for European retail.
