AI Transformation: Why 93% of Data Leaders Say the Obstacles Are Human, Not Technical

📌 This article is written for CTOs, CDOs, and tech executives leading AI deployments in production. It draws on field patterns and research published between November 2025 and February 2026.

Everyone talks about the technology. No one talks about the real problem.

You just validated your proof of concept. The model performs. The infrastructure holds. The budget is approved. And yet, six months later, your AI agent is gathering dust in a corner of your architecture while your teams keep working exactly as they did before.

I've lived this. And I've watched it repeat itself in nearly every organization deploying AI for the first time.

It's not a GPU problem. It's not a model problem. It's a human problem.

The data confirms it, brutally: in the Data & AI Leadership Exchange 2026 survey — conducted with over 600 CDOs and CAIOs — 93% of respondents identify culture and change management as the primary obstacle to AI adoption. Only 7% blame the technology.

As of: February 2026 — Source: Data & AI Leadership Exchange 2026 Executive Benchmark Survey

Read that number again. 93%. The technology is rarely the blocker. The problem is us.

What the headlines miss

The World Economic Forum projects 170 million new jobs by 2030. Consultants sell you 18-month transformation roadmaps. Vendors promise ROI in 90 days.

What they don't tell you: the main reason AI projects fail is neither the choice of model, nor data quality, nor cloud provider.

It's that nobody explained to the HR team why their validation process was about to change. Nobody prepared the middle manager whose role is evolving. Nobody answered the fundamental question every employee is silently asking: "Is this AI going to replace me?"

The counter-intuitive insight: Organizations that succeed with AI transformation are not those with the best model. They're the ones with the best change management program, and they started building it before they even chose their tech stack.

The blind trust paradox

There's another equally revealing figure, published in Informatica's CDO Insights 2026 report (600 global data leaders): 65% of employees say they trust the data powering their AI tools — but most lack the literacy to question it.

As of: January 2026 — Source: CDO Insights 2026, Informatica / Salesforce

This blind trust creates two concrete risks:

- The over-delegation risk: teams let the AI decide without verifying, which amplifies data errors downstream.

- The under-adoption risk: the most experienced employees — often the most skeptical — reject the tool on principle, without giving it a real chance.

In both cases, ROI collapses. And the technical leadership inherits a problem that no fine-tuning will fix.

The 3 field patterns that consulting firms don't document

Here's what I observe on the ground in enterprise deployments, beyond the POC use cases:

Pattern 1 — The "silent bypass"

Three weeks after launch, teams have found a way to work around the AI agent while appearing to use it. They feed the system minimal inputs so that its outputs are unusable, which gives them justification to go back to the old method. This behavior is rarely malicious. It's the rational response of an employee who hasn't understood why their work is changing.

What it reveals: meaning was never given. The transformation was framed as a technical decision, not as a professional evolution.

Pattern 2 — The unembedded middle manager

Leadership has signed off. Front-line teams have been trained. But the middle managers — the ones who bridge the two — were never involved. Result: they don't champion the new tool, they don't use it themselves, and their teams follow their implicit signal.

What it reveals: AI transformation is won or lost at middle management, not in boardrooms.

Pattern 3 — Training too late, too generic

Training sessions arrive after deployment, last two hours, and are delivered by IT teams who don't speak the business language. Employees leave with internal certifications they can't translate into daily usage.

What it reveals: AI upskilling must be role-specific, use-case-grounded, and begin before go-live — not after.

BCG documented this in February 2026: the companies realizing the most value from AI are consistently those with the most ambitious upskilling programs, started earliest.

As of: February 2026 — Source: "AI Transformation Is a Workforce Transformation", BCG, 2026

The "Human First" framework for AI deployments

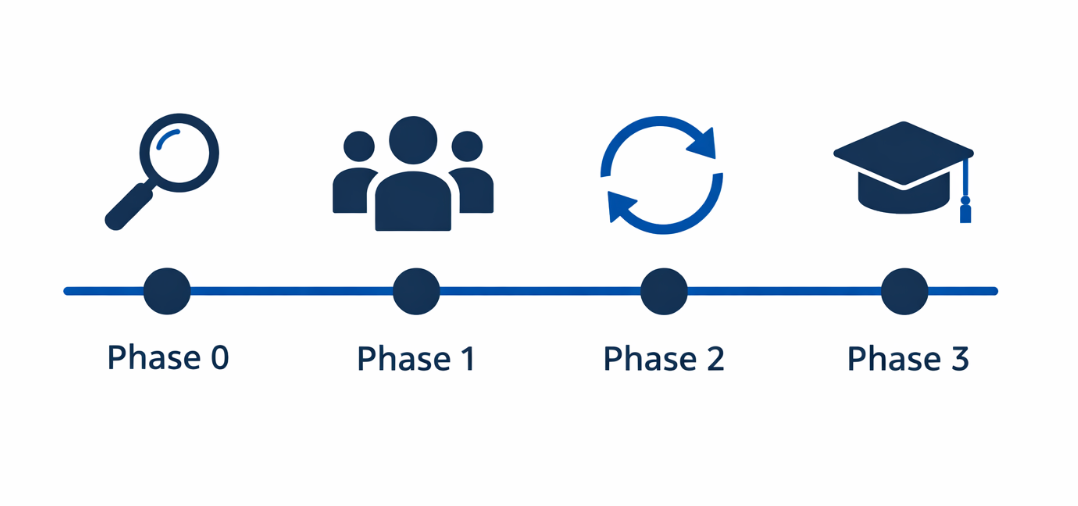

Here's the structure I apply on enterprise deployments to address human obstacles before they become production problems.

Phase 0 — Before the code (weeks -8 to -4)

Map the resistance, not just the use cases.

Before choosing your model, run individual interviews with end users (15 to 20 minutes, no questionnaire). Ask a single question: "What changes for you if this project succeeds?"

The answers will give you your real change management backlog: the fears, the habits to deconstruct, the benefits to highlight.

Deliverable: a "resistance map" per user persona, shared with the executive sponsor.
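One way to keep the resistance map consistent across interviewers is to store it as structured data. The sketch below is only an illustration: `InterviewNote`, `build_resistance_map`, and the three categories (taken from the fears, habits, and benefits mentioned above) are hypothetical names, not part of any standard tool.

```python
from collections import defaultdict
from dataclasses import dataclass

# Assumed categories, mirroring the fears, habits, and benefits named in the text.
KINDS = ("fear", "habit", "benefit")


@dataclass(frozen=True)
class InterviewNote:
    persona: str  # e.g. "HR validator" (hypothetical persona label)
    kind: str     # one of KINDS
    text: str     # short verbatim from the interview


def build_resistance_map(notes):
    """Group interview notes by persona, then by kind of note."""
    result = defaultdict(lambda: {kind: [] for kind in KINDS})
    for note in notes:
        if note.kind not in KINDS:
            raise ValueError(f"unknown note kind: {note.kind!r}")
        result[note.persona][note.kind].append(note.text)
    return dict(result)


if __name__ == "__main__":
    notes = [
        InterviewNote("HR validator", "fear", "Will my approval step disappear?"),
        InterviewNote("HR validator", "benefit", "Less time spent on re-keying"),
        InterviewNote("Middle manager", "habit", "Weekly report built by hand"),
    ]
    for persona, entries in build_resistance_map(notes).items():
        print(persona, entries)
```

The output is one entry per persona, which can be shared with the executive sponsor as is.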

Phase 1 — Ambassadors before users (weeks -4 to 0)

Identify 3 to 5 early adopters per business team. Not necessarily the most tech-savvy — often the most respected. Give them early access, involve them in testing, and train them to explain the tool to their peers.

These ambassadors do more for adoption than a month of corporate communications.

Phase 2 — Deployment with a short feedback loop (weeks 1 to 4)

Bi-weekly sprint reviews with users. Not to collect NPS scores — to gather verbatims and iterate in real time. An AI agent that doesn't serve the right use cases in the first few weeks dies from its bad reputation.

Phase 3 — Business-anchored upskilling (weeks 4 to 12)

Train by role, by scenario, in 30 minutes maximum. An AI training session that exceeds one hour without concrete use cases loses 80% of its impact within 48 hours.

Embed sessions into existing rituals: standups, weekly reviews, one-on-ones. AI becomes normal when it's discussed normally.

What this means for your roadmap

If you're leading an AI deployment in 2026, here are the three decisions this should change:

1. Budget change management as a technical line item. In projects that succeed, change management represents 15% to 25% of total budget — not a "communications" line cut when things run over.

2. Put an HR leader or a transformation officer in your AI product team. Not as an observer. As a co-pilot. Architecture decisions have human implications that CTOs alone don't always see.

3. Measure adoption, not just performance. Your AI dashboards track latency, accuracy, cost. Do they track actual usage rates, user satisfaction, abandonment rates? If not, you're flying blind.
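To make the third decision concrete, here is a minimal sketch of two adoption metrics computed from a usage log. The definitions are assumptions for illustration: "usage rate" is the share of licensed users active in the last `window_days`, and "abandonment rate" is the share of users who used the agent at some point but not within that window. `UsageEvent` and `adoption_metrics` are hypothetical names.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class UsageEvent:
    user_id: str
    day: date  # day on which the user actually used the AI agent


def adoption_metrics(events, licensed_users, as_of, window_days=30):
    """Return usage and abandonment rates for the window ending on as_of."""
    licensed = set(licensed_users)
    window_start = as_of - timedelta(days=window_days)

    # Only count events from licensed users, up to the reporting date.
    relevant = [e for e in events if e.user_id in licensed and e.day <= as_of]
    ever_active = {e.user_id for e in relevant}
    recently_active = {e.user_id for e in relevant if e.day > window_start}
    abandoned = ever_active - recently_active

    return {
        "usage_rate": len(recently_active) / len(licensed) if licensed else 0.0,
        "abandonment_rate": len(abandoned) / len(ever_active) if ever_active else 0.0,
    }


if __name__ == "__main__":
    events = [
        UsageEvent("u1", date(2026, 1, 5)),
        UsageEvent("u1", date(2026, 3, 20)),
        UsageEvent("u2", date(2026, 1, 10)),
    ]
    print(adoption_metrics(events, ["u1", "u2", "u3", "u4"], as_of=date(2026, 3, 31)))
```

User satisfaction is left out on purpose: it usually comes from surveys or verbatims rather than from logs.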

FAQ — The questions you're actually asking

Will AI really destroy jobs in my organization? The WEF data discussed at Davos 2026 projects 170 million new roles created and 92 million displaced by 2030, a net gain of 78 million globally. In organizations that succeed, AI redefines roles rather than eliminating them. The key is to actively redesign positions around new AI capabilities rather than letting change happen without a framework.

How long does it take for a team to genuinely adopt an AI agent? Based on field patterns observed across enterprise deployments, the full adoption cycle (regular, autonomous usage) ranges from 3 to 6 months depending on the team's digital maturity and the quality of the change-support program around it. The first 90 days are critical.

Should everyone be trained at the same time? No. The wave approach — ambassadors first, core teams next, full organization last — reduces systemic resistance and allows you to iterate on the pedagogy between waves.

How do you measure resistance to change before and just after deployment? Key indicators to watch: participation rate in co-design sessions before go-live (engagement signal), number of workarounds reported in the first few weeks (passive resistance signal), and escalation rate to management (organizational stress signal).
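These three indicators can be tracked per team with very little tooling. The sketch below assumes simple definitions that are mine, not a standard: participation is attendees over invitees, and escalation rate is escalations per active user. `TeamSignals` and `resistance_indicators` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TeamSignals:
    invited_to_codesign: int   # people invited to co-design sessions
    attended_codesign: int     # people who actually attended
    workarounds_reported: int  # workarounds observed in the first few weeks
    escalations: int           # issues escalated to management
    active_users: int          # users of the AI agent in the same period


def resistance_indicators(s):
    """Compute the three resistance signals for one team."""
    participation = (
        s.attended_codesign / s.invited_to_codesign if s.invited_to_codesign else 0.0
    )
    escalation_rate = s.escalations / s.active_users if s.active_users else 0.0
    return {
        "codesign_participation_rate": participation,  # engagement signal
        "workarounds_reported": s.workarounds_reported,  # passive resistance signal
        "escalation_rate": escalation_rate,  # organizational stress signal
    }


if __name__ == "__main__":
    team = TeamSignals(
        invited_to_codesign=20,
        attended_codesign=12,
        workarounds_reported=3,
        escalations=2,
        active_users=40,
    )
    print(resistance_indicators(team))
```

What counts as a worrying level depends on the organization, so the sketch reports the raw values and sets no thresholds.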

The bottom line

Enterprise AI transformation is not an engineering problem. It's a trust, meaning, and support problem. Organizations that understand this before writing a single line of code are the ones that deliver measurable ROI. The others spend their quarterly reviews explaining why their AI agent is "still being optimized."

The real KPI of a successful AI deployment is not model accuracy. It's the actual adoption rate at 90 days.

If this article was useful, subscribe to my newsletter to receive weekly insights from what I apply in production at large-scale retail organizations.

Sources

- Secondary - Data & AI Leadership Exchange 2026 Executive Benchmark Survey - squarespace.com - 2026-01 - https://static1.squarespace.com/...

- Secondary - CDO Insights 2026: Data governance and the trust paradox - Informatica / Salesforce - 2026-01-27 - https://www.informatica.com/blogs/cdo-insights-2026

- Secondary - AI Transformation Is a Workforce Transformation - BCG - 2026-02 - https://www.bcg.com/publications/2026/ai-transformation-is-a-workforce-transformation

- Secondary - Davos 2026: key takeaways on AI, skills and workforce reinvention - PeopleManagement / WEF - 2026-01 - https://www.peoplemanagement.co.uk/article/1946227

- Secondary - AI Workforce Trends 2026 - Gloat - 2025-12 - https://gloat.com/blog/ai-workforce-trends/
